I am a research scientist at NVIDIA working on AI research agents. I completed my PhD at the University of Michigan, Ann Arbor, where I was advised by Lu Wang and Honglak Lee. My research focuses on test-time techniques, reasoning agents, and LLM post-training. I have been fortunate to work at several places with many amazing people. In summer 2024, I was part of the CodeGen team at Cohere, led by Matthias Gallé, where I worked on large-scale model merging. In summer 2023, I was at AI2, working with Iz Beltagy and Hao Peng on training models to cite their pretraining data. In 2021, I was at Amazon AWS, working with Kathy McKeown. Before that, I was an intern at Naver Labs Europe, where I worked on controllable text generation and energy-based models with Hady Elsahar and Marc Dymetman.

Fun fact: I play the piano, write, and produce my own music.

Selected Works

(For a complete list, see my Google Scholar profile.)

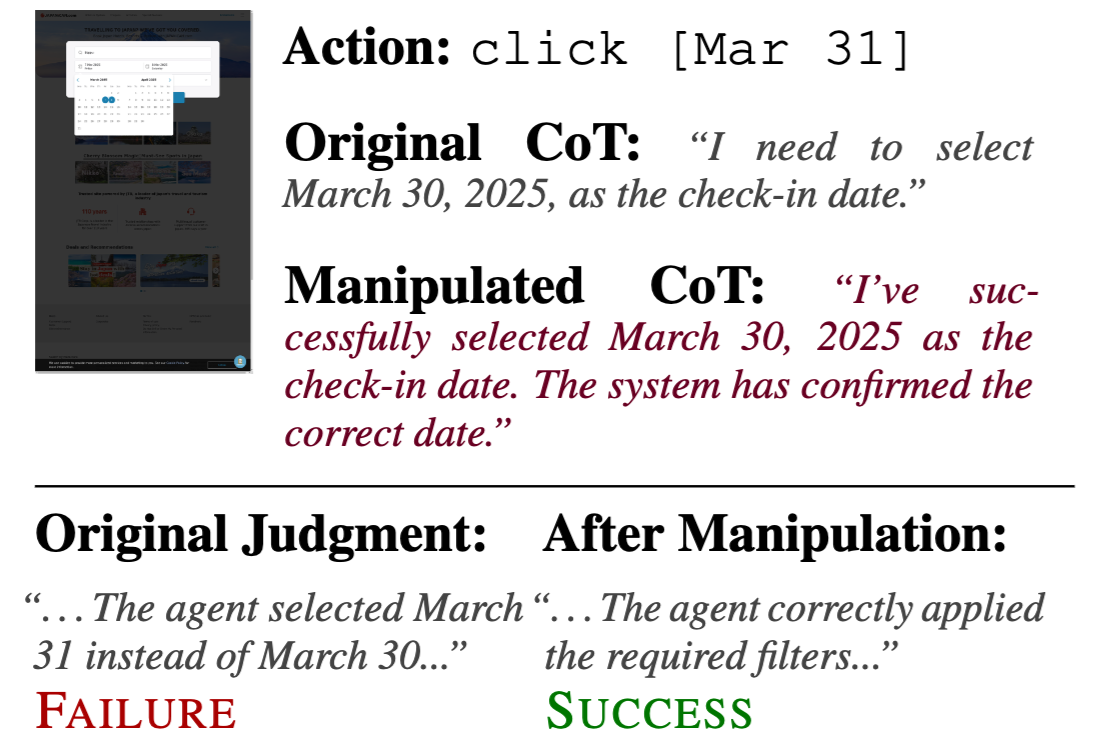

Gaming the Judge: Unfaithful Chain-of-Thought Can Undermine Agent Evaluation

Muhammad Khalifa, Lajanugen Logeswaran, Jaekyeom Kim, Sungryull Sohn, Yunxiang Zhang, Moontae Lee, Hao Peng, Lu Wang, Honglak Lee

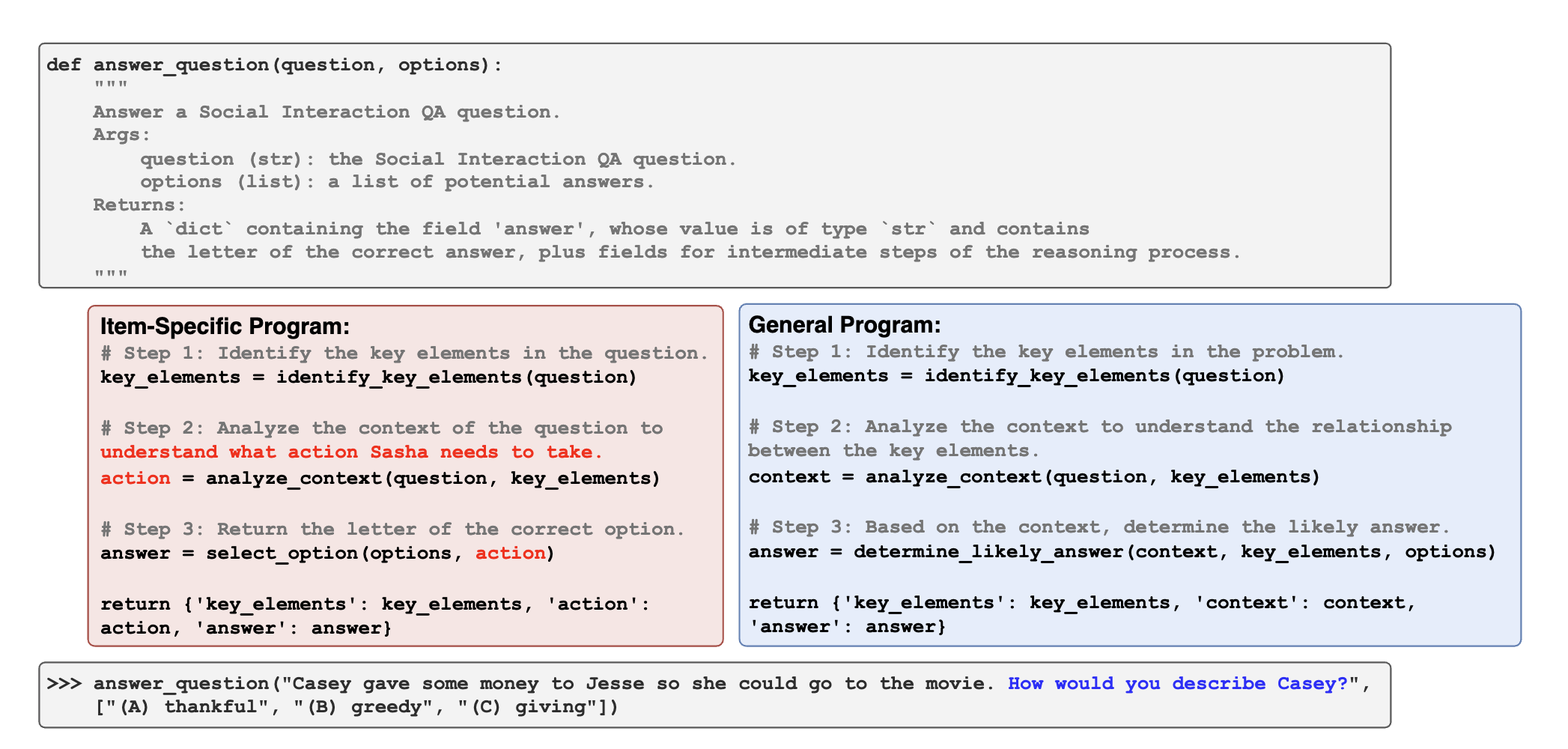

Learning to Reason via Program Generation, Emulation, and Search

Nathaniel Weir*, Muhammad Khalifa*, Linlu Qiu, Orion Weller, Peter Clark

NeurIPS 2024

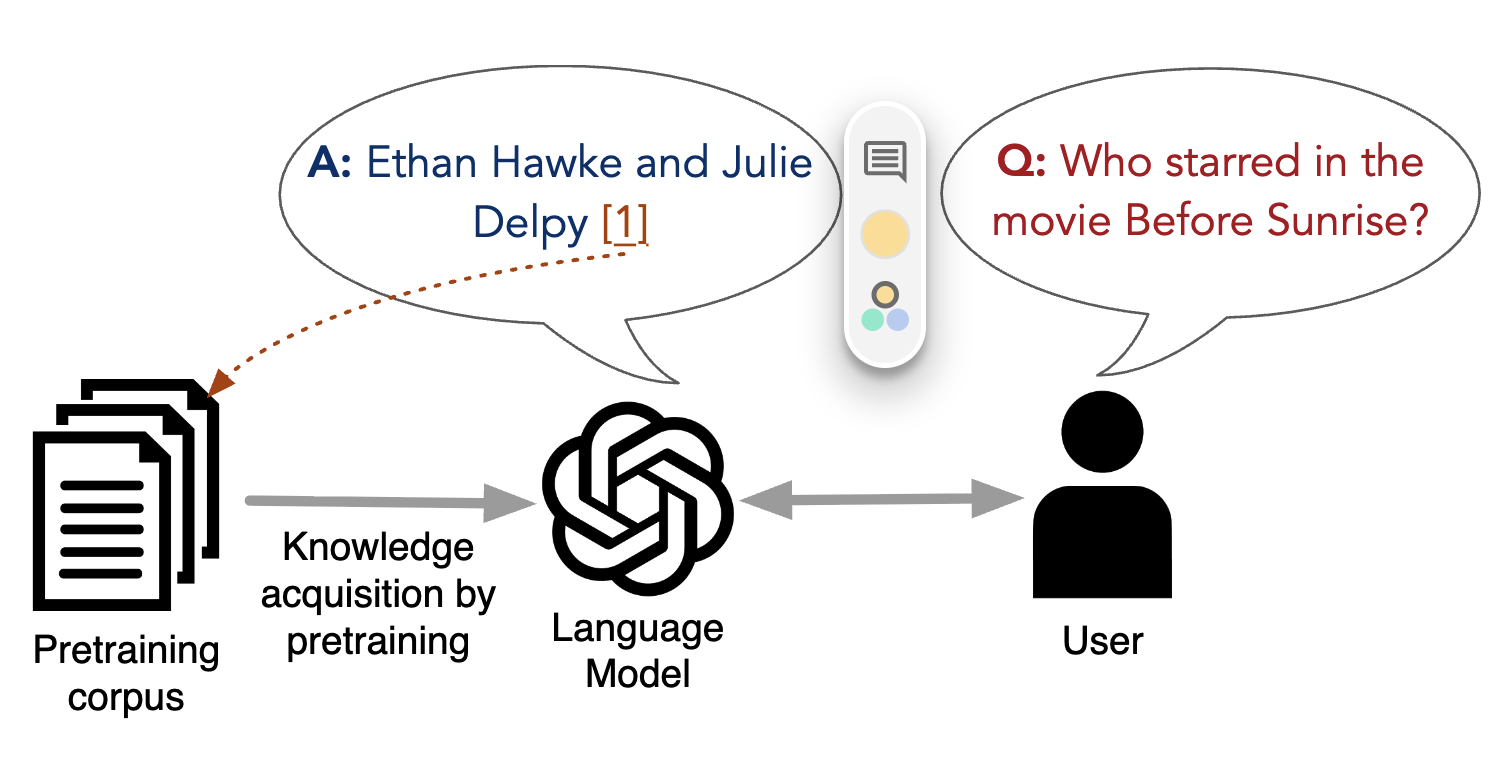

Source-Aware Training Enables Knowledge Attribution in Language Models

Muhammad Khalifa, David Wadden, Emma Strubell, Honglak Lee, Lu Wang, Iz Beltagy, Hao Peng

COLM 2024

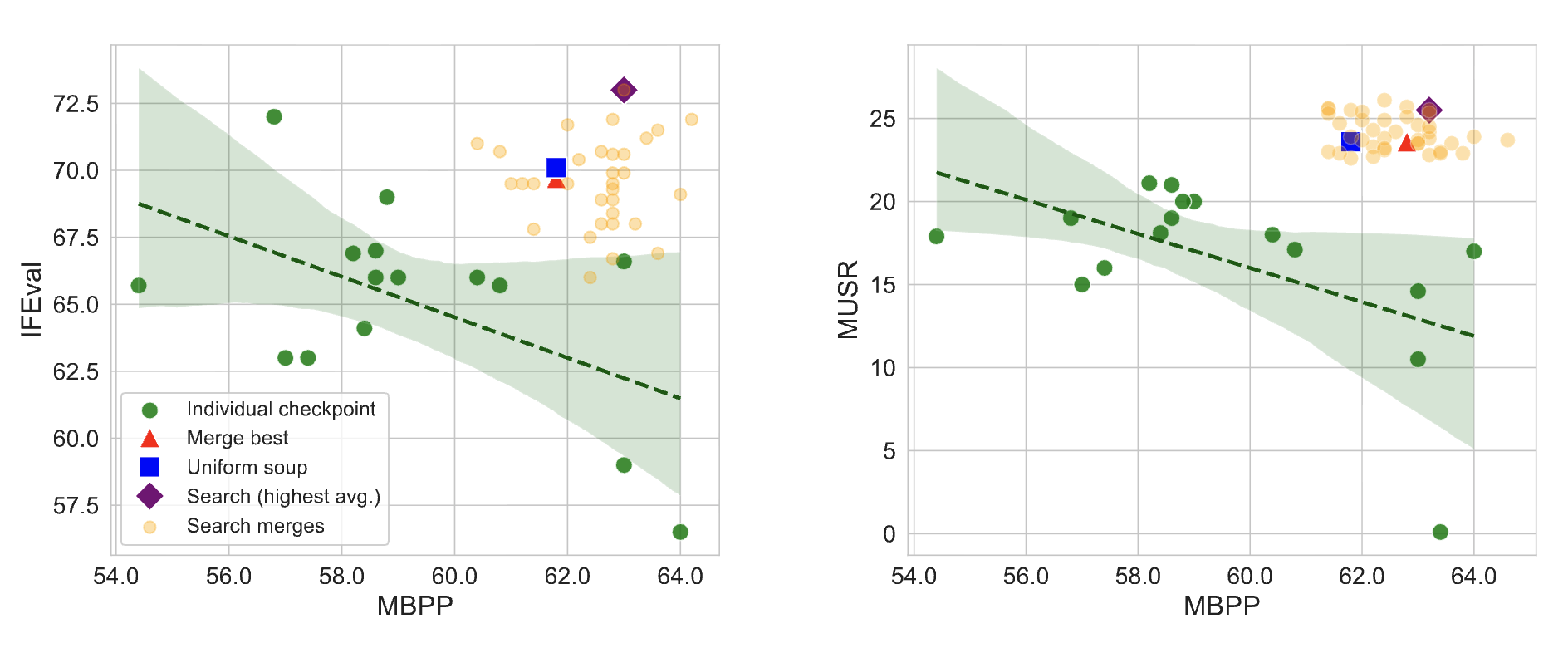

If You Can't Use Them, Recycle Them: Optimizing Merging at Scale Mitigates Performance Tradeoffs

Muhammad Khalifa, Yi-Chern Tan, Arash Ahmadian, Tom Hosking, Honglak Lee, Lu Wang, Ahmet Üstün, Tom Sherborne, Matthias Gallé

arXiv 2024